On October 15, 2025, Apple announced the M5 system on a chip (SoC) and an updated Apple Vision Pro built on it. The processor upgrade accelerates artificial intelligence and graphics processing, establishing a new hardware baseline for enterprise applications and spatial documentation workflows.

M5 System on a Chip (SoC): Hardware Architecture

The M5 SoC is built on third-generation 3-nanometer process technology and features a 10-core GPU with a dedicated Neural Accelerator integrated into each core. The GPU supports hardware-accelerated ray tracing and, in Vision Pro, renders 10% more pixels than the previous generation while supporting display refresh rates up to 120Hz. These architectural upgrades reduce processing latency for on-device AI tasks and enable real-time rendering of high-density spatial environments.
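
A higher refresh rate shortens the time available to render each frame. Rendering more pixels per frame at that rate multiplies the required pixel throughput. The Python sketch below shows this arithmetic. The function names are hypothetical, and the 90Hz comparison baseline is an illustrative assumption, not an Apple specification.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available to render one frame, in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    return 1000.0 / refresh_hz


def relative_pixel_throughput(pixel_scale: float, new_hz: float, baseline_hz: float) -> float:
    """Pixels rendered per second, relative to a baseline configuration.

    pixel_scale: ratio of pixels rendered per frame (1.10 means 10% more).
    new_hz / baseline_hz: refresh rates being compared (baseline is assumed).
    """
    if baseline_hz <= 0:
        raise ValueError("baseline refresh rate must be positive")
    return pixel_scale * new_hz / baseline_hz


if __name__ == "__main__":
    for hz in (90, 120):
        print(f"{hz} Hz -> {frame_budget_ms(hz):.2f} ms per frame")
    # Hypothetical comparison: 10% more pixels per frame at 120 Hz vs. an assumed 90 Hz baseline
    print(f"throughput ratio: {relative_pixel_throughput(1.10, 120, 90):.3f}")
```

At 120Hz each frame has roughly 8.33 ms of rendering time. Under the assumed 90Hz baseline, 10% more pixels per frame works out to about 1.47 times the pixel throughput.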

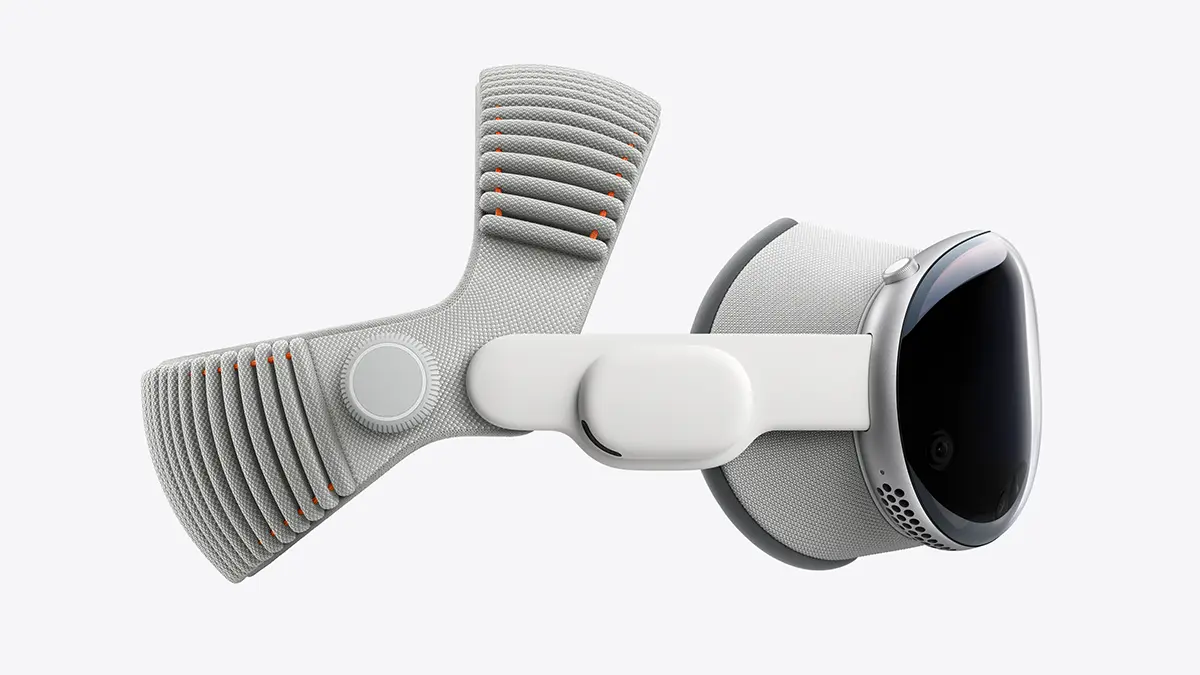

Ergonomic Engineering and Form Factor Specifications

Prolonged use of head-mounted displays (HMDs) has traditionally been limited by uneven weight distribution and by vestibular mismatch between perceived and physical movement. These ergonomic limitations reduce the viability of HMDs in professional deployments.

The Apple Vision Pro addresses cranial load distribution with a Dual Knit Band that incorporates a counterweight. The battery is housed in a separate, tethered pack, which removes its mass from the head-mounted unit. The battery pack provides up to 2.5 hours of general use per charge, and the device can be used while the pack is charging, allowing extended enterprise sessions.

Market Landscape: Hardware Ecosystem Analysis

The spatial computing hardware sector is currently bifurcated. Apple's strategy with the Vision Pro targets the premium enterprise segment, prioritizing display fidelity and SoC processing capacity. Meta's ecosystem, specifically the Quest 3 and Quest 3S, instead prioritizes affordability and deployment at scale, and its Horizon OS emphasizes multitasking and virtual workspace configuration. Both ecosystems support mixed reality applications but address different procurement requirements in the enterprise and consumer sectors.

Enterprise Deployment Protocols

High-performance spatial computing hardware supports specialized enterprise workflows. 3D digital twins produced by platforms such as Matterport can be viewed directly in the Vision Pro's native web browser, using the headset's display fidelity for remote infrastructure inspection. This enables detailed navigation of physical assets for real estate, construction, and facility management operations.

Additional enterprise deployments include pilot training simulations developed by CAE and automotive visualization software used by Porsche. These applications demonstrate the operational and data-visualization value of blending digital assets with physical environments, workloads the M5 SoC processes on device.

The following sections outline the hardware dependencies and integration practices that preceded this generation.

Legacy Hardware Dependencies: iOS Integration Workflow

Early spatial documentation workflows used Insta360 cameras connected directly to iOS devices through the Lightning port, establishing an iOS-centric workflow. The later transition to standalone spatial capture cameras (e.g., the Matterport Pro2) preserved this dependency. The stability of iOS applications, built-in AirDrop file transfer, and the iPad's capacity for on-site data verification established iOS as the standard operating environment for high-volume spatial data processing.

Hardware Infrastructure Baseline: 2020 Ecosystem

The 2020 procurement cycle illustrates the early hardware dependencies of spatial computing workflows.

Processing Prerequisites

In this workflow, the A13 SoC served as the baseline processor for stable execution of AR/VR applications and spatial capture processing. Operating the Matterport Pro2 required mobile hardware with comparable processing and memory specifications.

iPad Pro Implementation

The iPad Pro served as the primary on-site device for processing and displaying spatial data, including point clouds and mesh floor plans. Its LiDAR Scanner, introduced in 2020, established early practices for depth mapping and AR asset placement.

macOS Ecosystem Integration

Integration across Apple devices simplified data offloading through iCloud sync and AirDrop. These built-in services became the standard procedure for moving large spatial data files from mobile capture devices to desktop processing hardware.